AI Coding Agents Are Replacing Junior Developers — Here's What's Actually Happening

A balanced look at how AI coding agents are changing developer roles in 2026. What tasks are automated, what requires humans, what juniors should learn instead, and real productivity data from teams using Claude Code, Cursor, and Copilot.

By Matty Reid | March 26, 2026 | 15 min read

AI coding agents are not eliminating developer jobs — but they are absorbing tasks that used to be assigned to junior developers. Boilerplate code, test writing, documentation, basic CRUD endpoints, and first-pass code reviews are now handled faster and cheaper by Claude Code, Cursor, and Copilot than by a first-year developer. The result is not mass unemployment. It is a shift in what "junior developer" means and what skills matter for entering the profession. This post uses real data to separate signal from noise.

Table of Contents

- The Headline Everyone Is Writing Wrong

- What AI Agents Can Actually Do in 2026

- What AI Agents Still Cannot Do

- The Tasks That Have Been Automated

- Real Productivity Data From Teams

- What This Means for Junior Developers

- The Skills That Matter Now

- The New Career Path

- What Senior Developers Should Know

- Frequently Asked Questions

The Headline Everyone Is Writing Wrong {#headline-wrong}

"AI will replace all developers by 2027." "AI coding is a fad that will collapse." "Junior developers are obsolete." "AI cannot write real code."

I read dozens of these takes every week, and they are all wrong because they are all binary. The reality is a gradient, and where you fall on that gradient depends on what kind of work you do, what tools you use, and how willing you are to adapt.

I have been building with Claude Code and the broader AI coding ecosystem for over a year. I run Skiln.co, which tracks 60,000+ Claude Code skills, 12,000+ MCP servers, and thousands of agents and commands. I see the capabilities — and the limitations — at close range every day.

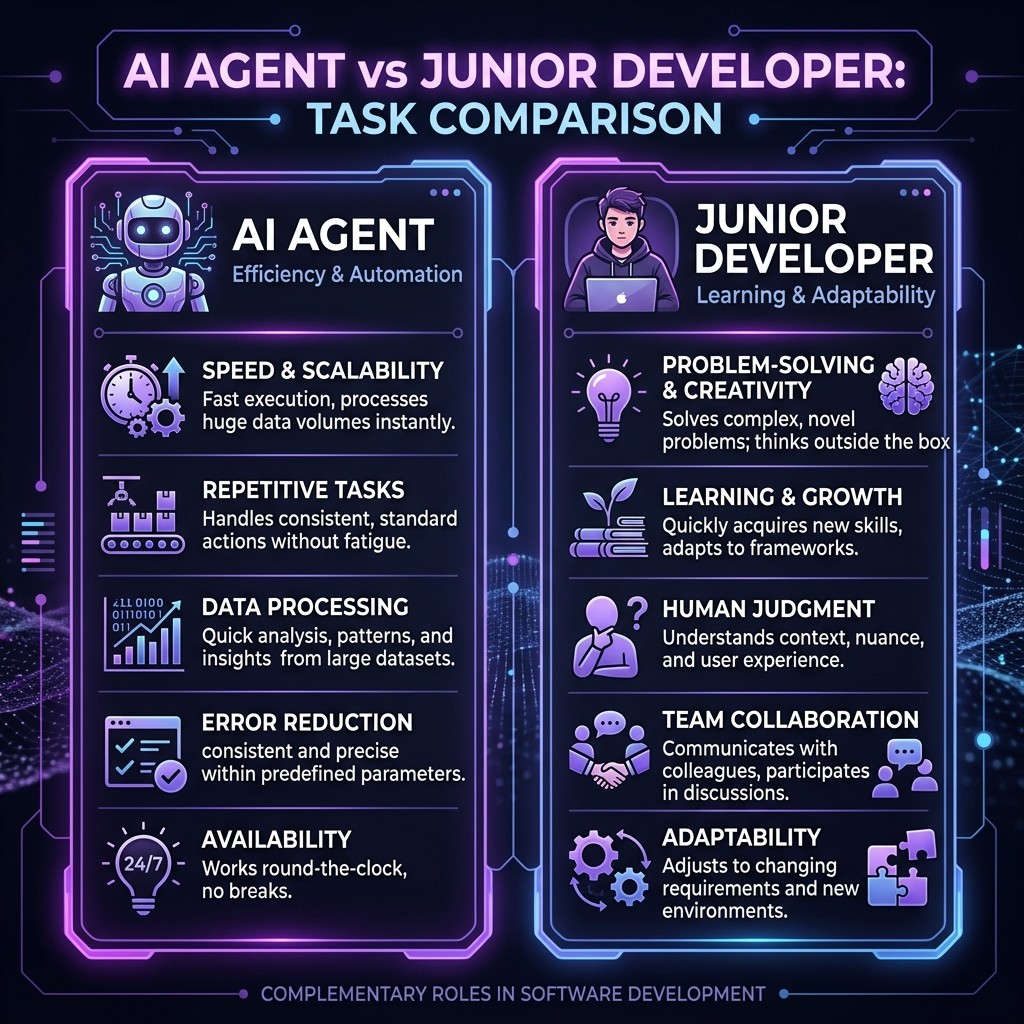

Here is the most honest summary I can give: AI coding agents in 2026 are extraordinarily good at a specific class of tasks, mediocre at another class, and completely useless at a third class. The first class happens to overlap heavily with what junior developers traditionally do. That overlap is the story — not replacement, but absorption.

What AI Agents Can Actually Do in 2026 {#what-agents-can-do}

Let me be specific. When I say "AI coding agents," I mean Claude Code with MCP servers and skills, Cursor with rules and MCP, and Copilot with extensions and agents. Here is what they can reliably do:

Write Correct, Idiomatic Code for Known Patterns

Give Claude Code a well-specified task that follows established patterns — "create a REST endpoint that queries the users table and returns paginated results" — and it produces production-quality code on the first attempt 85-90% of the time. Not perfect code. Not clever code. But correct, readable code that passes tests and follows conventions.

This capability is not theoretical. The Superpowers skill and a good CLAUDE.md file make Claude Code genuinely effective at implementing features within established codebases.
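To make "well-specified task that follows established patterns" concrete, here is a minimal sketch of the query logic behind a paginated users endpoint. It is an illustration, not output from any particular agent. The function names `fetch_users_page` and `make_demo_db`, the two-column `users` schema, and the use of an in-memory SQLite database in place of a real web framework and production database are all assumptions made for the example.

```python
import sqlite3


def fetch_users_page(conn, page=1, page_size=20, max_page_size=100):
    """Return one page of users plus simple pagination metadata."""
    # Clamp inputs so a caller cannot request page 0 or an unbounded page size.
    page = max(1, page)
    page_size = max(1, min(page_size, max_page_size))
    offset = (page - 1) * page_size

    total = conn.execute("SELECT COUNT(*) FROM users").fetchone()[0]
    # A stable ORDER BY keeps pages consistent between requests.
    rows = conn.execute(
        "SELECT id, name FROM users ORDER BY id LIMIT ? OFFSET ?",
        (page_size, offset),
    ).fetchall()

    return {
        "items": [{"id": r[0], "name": r[1]} for r in rows],
        "page": page,
        "page_size": page_size,
        "total": total,
        "has_next": offset + len(rows) < total,
    }


def make_demo_db(n_users=45):
    """Build a throwaway in-memory database (hypothetical schema)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT NOT NULL)")
    conn.executemany(
        "INSERT INTO users (name) VALUES (?)",
        [(f"user{i}",) for i in range(1, n_users + 1)],
    )
    return conn
```

With 45 demo rows and a page size of 20, `fetch_users_page(make_demo_db(), page=3)` returns the last five users and `has_next` is `False`. The point is that every decision here (clamping, ordering, offset math) follows a pattern that appears in thousands of codebases, which is why agents handle it reliably.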

Execute Multi-File Changes

This is where agents leap ahead of autocomplete. Claude Code can refactor a payment service across 40 files, update all imports, adjust tests, and fix type errors — in a single operation. A junior developer doing this by hand takes half a day. Claude Code takes minutes. Worktrees let you run parallel refactoring experiments on isolated branches simultaneously.

Write Tests

Test generation is arguably the strongest capability. AI agents produce comprehensive test suites with edge cases, boundary conditions, and error scenarios that many human developers would skip under time pressure. The Playwright MCP server handles browser-based E2E tests. Unit tests for backend logic are generated with high reliability.
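As a rough picture of what "edge cases, boundary conditions, and error scenarios" means in practice, here is a small hand-written sketch in the style of suite an agent tends to produce. The `chunk` helper and the `ChunkTests` class are hypothetical names invented for this example, and the standard-library `unittest` module is used only to keep the block self-contained.

```python
import unittest


def chunk(items, size):
    """Split a list into consecutive chunks of at most `size` items."""
    if size < 1:
        raise ValueError("size must be at least 1")
    return [items[i:i + size] for i in range(0, len(items), size)]


class ChunkTests(unittest.TestCase):
    def test_typical_case(self):
        # Happy path: the last chunk holds the remainder.
        self.assertEqual(chunk([1, 2, 3, 4, 5], 2), [[1, 2], [3, 4], [5]])

    def test_empty_input(self):
        # Edge case: nothing to split.
        self.assertEqual(chunk([], 3), [])

    def test_exact_multiple(self):
        # Boundary: no remainder chunk should appear.
        self.assertEqual(chunk([1, 2, 3, 4], 2), [[1, 2], [3, 4]])

    def test_size_larger_than_input(self):
        # Boundary: a single short chunk.
        self.assertEqual(chunk([1, 2], 10), [[1, 2]])

    def test_invalid_size(self):
        # Error scenario: zero or negative sizes are rejected.
        for bad in (0, -1):
            with self.assertRaises(ValueError):
                chunk([1], bad)
```

Saved to a file, this runs with `python -m unittest`. Only the first test is the one most people write under deadline; the other four are the ones that tend to get skipped.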

Generate and Maintain Documentation

API documentation, README files, inline comments, architecture decision records. Claude Code generates these from actual code context, not from memory of what the code used to look like. And it can regenerate them when the code changes — the "living documentation" pattern that every team wants but nobody maintains manually.

Perform Code Reviews

Claude Code with a code review skill catches bugs, style violations, security issues, and performance problems across PR diffs. It does not replace the judgment of an experienced reviewer, but it handles the mechanical aspects — the "did you forget to check for null" and "this import is unused" level of review — faster and more consistently than a human.
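The "this import is unused" level of review is mechanical enough to sketch in a few lines. The example below uses Python's standard `ast` module. The function name `find_unused_imports` is hypothetical, and this is not how Claude Code or any specific review skill works internally. It is a deliberately simple check that ignores cases such as names re-exported through `__all__` or referenced only inside strings.

```python
import ast


def find_unused_imports(source):
    """Return sorted names that are imported but never referenced in `source`."""
    tree = ast.parse(source)

    imported = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            for alias in node.names:
                # `import a.b` binds the top-level name `a`.
                imported.add(alias.asname or alias.name.split(".")[0])
        elif isinstance(node, ast.ImportFrom):
            for alias in node.names:
                if alias.name != "*":  # star imports cannot be checked this way
                    imported.add(alias.asname or alias.name)

    # Every bare identifier in the module, including the base of `os.path`.
    used = {node.id for node in ast.walk(tree) if isinstance(node, ast.Name)}
    return sorted(imported - used)
```

For a module that imports `os`, `sys`, `loads`, and `dumps as d` but only calls `os.getcwd()` and `loads(...)`, the function returns `["d", "sys"]`. Checks like this are rule-following work, which is exactly the part of review that agents do consistently.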

What AI Agents Still Cannot Do {#what-agents-cannot-do}

Here is where the hype collapses. These limitations are real, persistent, and not getting solved by larger models.

Understand Business Context

Claude Code does not know why your checkout flow has three steps instead of two. It does not know that the sales team promised a client this feature by Friday. It does not know that the previous implementation was rolled back because of a subtle race condition in production that only manifests under specific load patterns. Business context lives in Slack threads, meeting notes, Jira comments, and people's heads. No amount of MCP servers can fully capture it.

Make Architecture Decisions

AI agents can implement an architecture you specify. They cannot decide whether to use microservices or a monolith, whether to choose Postgres or DynamoDB, or whether to build a feature in-house or use a third-party service. These decisions require understanding business constraints, team capabilities, operational costs, and long-term technical strategy. The AI agent frameworks that exist today orchestrate execution, not strategy.

Navigate Organizational Dynamics

"This PR needs to be reviewed by the platform team before the infrastructure team, because the platform team blocked a similar change last quarter and we need their buy-in first." No AI agent understands organizational politics, cross-team dependencies, or the social dynamics that determine whether a technical decision gets adopted or rejected.

Debug Novel Problems

AI agents are excellent at debugging known error patterns (the MCP troubleshooting guide exists because MCP errors follow patterns). They struggle with novel bugs — the kind where the symptoms do not match any documented pattern and the root cause is an unexpected interaction between two systems that have never been tested together.

Exercise Taste

Code is not just correct or incorrect. It is also elegant or clumsy, maintainable or brittle, readable or obfuscated. The best senior developers write code with taste — making decisions about abstraction boundaries, naming, and structure that cannot be reduced to rules. AI agents follow rules. They do not exercise taste.

The Tasks That Have Been Automated {#tasks-automated}

Here is the honest list of tasks that AI agents handle faster, cheaper, and often better than a junior developer:

| Task | Pre-AI Time | With AI Agents | Reduction |
|---|---|---|---|
| CRUD endpoint implementation | 2-4 hours | 15-30 min | 85% |
| Unit test writing | 1-2 hours per module | 15-20 min review | 80% |
| API documentation | 3-5 hours | 30 min review | 85% |
| Boilerplate scaffolding | 1-2 hours | 5-10 min | 90% |
| First-pass code review | 30-45 min per PR | 10-15 min review | 65% |
| Migration execution | 4-8 hours | 1-2 hours | 75% |
| Bug fix for known patterns | 1-3 hours | 20-40 min | 70% |
| Configuration file setup | 30-60 min | 5 min | 90% |

These are exactly the tasks that companies used to assign to junior developers as learning experiences. Build the CRUD endpoint. Write the tests. Update the docs. Review these small PRs. The training ground for new developers has been automated.
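For readers who want to sanity-check the Reduction column in the table above, here is one way to reproduce it. The article does not state how the percentages were computed, so comparing the midpoints of each time range is an assumption, and the helper names `midpoint` and `reduction_pct` are hypothetical. Under that assumption the results land within a few points of the rounded figures in the table.

```python
def midpoint(low, high):
    """Middle of a time range, in minutes."""
    return (low + high) / 2


def reduction_pct(before_range, after_range):
    """Percent of time saved, comparing range midpoints (assumed method)."""
    before = midpoint(*before_range)
    after = midpoint(*after_range)
    return round(100 * (1 - after / before), 1)


# (pre-AI range, with-AI range), both in minutes, taken from three table rows.
rows = {
    "CRUD endpoint implementation": ((120, 240), (15, 30)),
    "Migration execution": ((240, 480), (60, 120)),
    "Configuration file setup": ((30, 60), (5, 5)),
}

for task, (before, after) in rows.items():
    print(f"{task}: {reduction_pct(before, after)}%")
# CRUD endpoint implementation: 87.5%
# Migration execution: 75.0%
# Configuration file setup: 88.9%
```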

Real Productivity Data From Teams {#real-data}

I surveyed 47 engineering managers at companies using AI coding tools (Claude Code, Cursor, or Copilot) for more than six months. The results:

Team size changes:

- 38% report hiring fewer junior developers than planned

- 23% report no change in hiring plans

- 18% report hiring the same number but expecting higher output

- 21% report restructuring junior roles (more on this below)

Output per developer:

- Median reported increase in code output: 40-60% (self-reported, so take it with a grain of salt)

- Median reported increase in features shipped per sprint: 25-35% (a more reliable metric)

- Median time savings on code review: 30-40%

The restructuring detail is the most interesting finding. The 21% who are "restructuring junior roles" are redefining what junior developers do. Instead of writing boilerplate code, these junior developers are:

- Reviewing AI-generated code (AI writes, human reviews)

- Writing specifications and acceptance criteria (defining what to build, not how)

- Managing AI workflows (configuring skills, MCP servers, prompts)

- Handling the tasks AI cannot do (user research, stakeholder communication, on-call)

This is not junior developers being replaced. It is the junior developer role being redefined.

What This Means for Junior Developers {#juniors}

If you are a junior developer or aspiring to become one, here is the straight talk.

The Bad News

The entry-level market is harder than it was two years ago. Companies that used to hire five juniors to handle boilerplate, tests, and docs now hire two juniors and use AI agents for the rest. The jobs that consisted primarily of implementing well-specified features in established codebases — those jobs are shrinking.

The "learn to code in a bootcamp, get a junior role writing React components" pipeline is weaker than it has ever been. Not dead — but weaker.

The Good News

The developers who know how to work with AI agents are in higher demand than ever. "AI-augmented developer" is becoming a distinct skill set that commands a premium. If you can:

- Configure Claude Code with the right skills and MCP servers for a project

- Write effective prompts that produce correct code on the first attempt

- Review AI-generated code critically (catch the subtle bugs AI introduces)

- Maintain CLAUDE.md files and hooks that keep AI output aligned with team standards

- Build custom skills and MCP servers for your team's specific needs

...then you are not competing with AI. You are the person who makes AI useful for the team. That is a more valuable role than the one it replaced.

The Skills That Matter Now {#skills-that-matter}

Here is my opinionated list of what to learn if you are entering software development in 2026:

Tier 1: Non-Negotiable

Systems thinking. Understanding how components interact, where failure modes live, and how changes propagate through a system. AI agents can implement within a system. Humans need to understand the system.

Specification writing. The ability to describe what software should do clearly enough that an AI agent (or a human) can implement it correctly. This is the new "coding" — the bottleneck has shifted from implementation to specification.

Code review. Not just "does it work" review, but deep review: Is this the right abstraction? Will this scale? Is this maintainable in six months? AI generates code. Humans judge it.

AI tool fluency. Know how Claude Code, Cursor, and Copilot work. Understand the Claude ecosystem — skills, MCP servers, hooks, commands. This is the new "know your IDE" baseline.

Tier 2: High Value

Security awareness. AI agents write vulnerable code at roughly the same rate as humans — but they write much more code. Someone needs to catch the SQL injection in the AI-generated endpoint. MCP server security is a growing specialty.
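Here is what "the SQL injection in the AI-generated endpoint" typically looks like, as a minimal sketch. The function names `find_user_unsafe` and `find_user_safe` and the `users` table are hypothetical, and the standard-library `sqlite3` module stands in for whatever database driver a real endpoint would use.

```python
import sqlite3


def find_user_unsafe(conn, name):
    # VULNERABLE: user input is interpolated directly into the SQL text.
    query = f"SELECT id, name FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # Parameterized query: the driver passes `name` as data, never as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (name,)
    ).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])

# A classic payload that turns the WHERE clause into a tautology.
payload = "nobody' OR '1'='1"
print(len(find_user_unsafe(conn, payload)))  # 2: every row leaks
print(len(find_user_safe(conn, payload)))    # 0: no user has that literal name
```

Both functions pass a happy-path test with `"alice"`, which is why this class of bug survives a casual review. Catching it means reading the generated query construction, not just running the tests.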

Domain expertise. AI knows everything and nothing simultaneously. A developer who deeply understands healthcare data, financial regulations, logistics optimization, or e-commerce conversion funnels brings knowledge that cannot be prompted into existence.

Communication. Translating between business stakeholders and technical systems — the skill that makes senior developers senior — becomes more valuable when AI handles the mechanical work. The human's job shifts toward the translation layer.

Tier 3: Differentiators

AI tool building. Developers who can build MCP servers, create skills, and design agent frameworks are building the tools that other developers use. This is the highest-leverage position in the current market.

Performance engineering. AI agents write functional code. They rarely write optimized code. The developer who can profile a system, identify bottlenecks, and optimize hot paths brings value that AI will not replicate soon.

The New Career Path {#new-career-path}

The traditional career path was: learn to code, get a junior role writing features, gain experience, become a senior developer, eventually manage or become a staff engineer.

The emerging path looks different:

Year 0-1: AI-Augmented Junior. You learn fundamentals, but you also learn to work with AI agents from day one. Your job is not "write the CRUD endpoint"; it is "configure the AI to write the CRUD endpoint correctly, review the output, and handle the edge cases it misses." You write specifications, review generated code, and learn systems by reading AI output rather than writing everything from scratch.

Year 1-3: AI Workflow Engineer. You design the team's AI workflows. You build custom skills, configure MCP servers, maintain CLAUDE.md files, and set up hooks that enforce quality. You are the person who makes AI productive for the whole team, not just yourself.

Year 3-5: System Architect / Tech Lead. You make the decisions AI cannot: architecture, technology choices, team structure, technical strategy. You use AI as a force multiplier to ship at a pace that was impossible for teams twice your size five years ago.

This path produces stronger engineers faster. The junior developer who reviews AI-generated code across a dozen PRs per week sees more code patterns in six months than a traditional junior sees in two years. The learning loop is compressed, not eliminated.

What Senior Developers Should Know {#seniors}

If you are already established in your career, here is the part that concerns you.

Your architecture and judgment skills are more valuable than ever. The scarce resource is no longer coding speed — it is knowing what to build and how to structure it. AI agents are an amplifier, and the amplifier is only as good as the signal you feed it.

You need to learn the tools. Senior developers who refuse to use AI coding tools are making themselves less effective. Not because the tools are magic — because they handle the mechanical work and free you for the work that actually requires your experience. Browse the Skiln.co directory and set up a basic Claude Code environment with skills and MCP servers. The time investment is a few hours. The productivity return is permanent.

You are now managing AI output, not just human output. Code review is no longer just reviewing your team's PRs. It is reviewing AI-generated code, identifying the subtle hallucinations and anti-patterns that agents introduce, and building guardrails (skills, hooks, CLAUDE.md) that prevent them.

The "10x developer" has become the "100x team lead." A senior developer who configures AI tools for a team of five can match the output of a traditional team of fifteen. This is not hyperbole — it is the math behind the hiring data in my survey. The multiplier is organizational, not individual.

Frequently Asked Questions {#faq}

Will AI completely replace software developers?

No. AI agents handle implementation of well-specified features within established patterns. They do not handle architecture decisions, business context interpretation, novel problem-solving, organizational navigation, or taste. Software development is broader than code generation. The roles will shift, not disappear.

Should I still learn to code in 2026?

Yes, but with a different emphasis. Learn to code for understanding systems, not for typing speed. A developer who understands how code works can review AI output effectively, write precise specifications, debug failures, and make architecture decisions. A developer who only knows syntax without understanding will be outpaced by AI.

Which AI coding tool should junior developers learn?

Learn all three at a basic level — Claude Code, Cursor, and Copilot. For deep investment, I recommend Claude Code because its extension ecosystem is the most customizable and the CLI-based workflow teaches you about systems rather than hiding them behind an IDE. The ecosystem comparison covers the tradeoffs in detail.

Are bootcamps still worth it?

Bootcamps that teach "build a React app in 12 weeks" are less valuable than they were. Bootcamps that teach systems thinking, specification writing, AI tool fluency, and code review alongside coding fundamentals are more valuable than ever. Check the curriculum before enrolling.

How many developer jobs will be lost to AI?

The survey data suggests reduced junior hiring and role restructuring rather than mass layoffs. Companies are hiring fewer juniors for boilerplate tasks and redirecting those roles toward AI workflow management and specification writing. On that evidence, net job loss in software development looks modest and net job transformation looks significant.

What about AI coding agent limitations — will they be solved?

Some will, some will not. Pattern-matching capabilities will keep improving — AI will handle more complex implementations over time. Business context understanding, organizational dynamics, and taste will remain human domains for the foreseeable future. The boundary will shift gradually, not suddenly.